From Pavlov's Dogs to Robots with Manners: An Entertaining Guide to Reinforcement Learning

Have you ever played a game that seemed impossible to beat? You try and try, but you always end up losing. Maybe you start to notice some patterns and figure out some strategies to improve your chances. This is essentially what reinforcement learning is all about.

We all know the famous experiment where Pavlov conditioned dogs to salivate at the sound of a bell. But what does this have to do with reinforcement learning (RL)? Well, it turns out that RL draws inspiration from the fundamental principles observed in Pavlov’s classical conditioning.

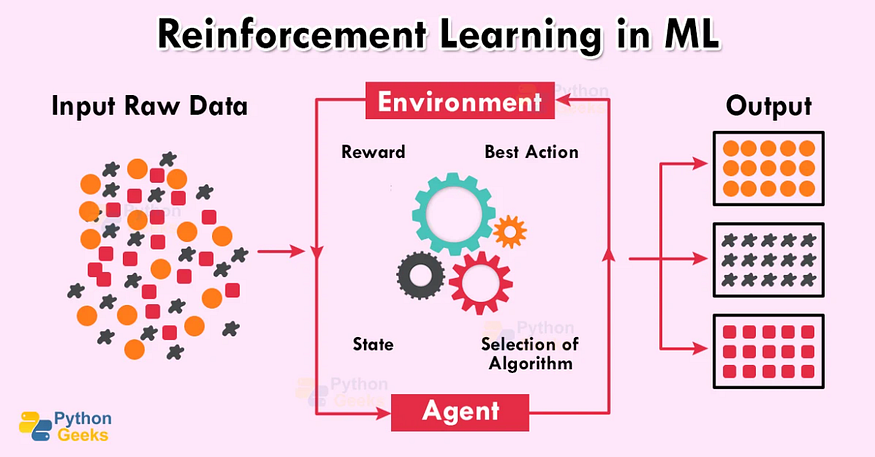

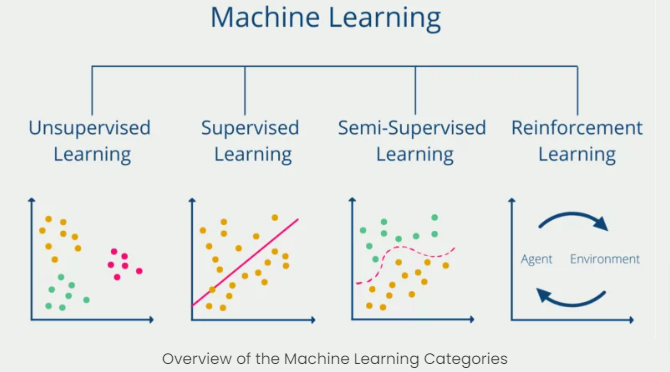

Reinforcement learning (RL) is a subfield of machine learning that focuses on training agents to make decisions in an uncertain, dynamic environment. In other words, it’s about teaching machines to learn from their experiences in the world and adjust their behaviour accordingly. RL has been used to teach robots how to navigate complex environments, to develop intelligent agents for playing games, and even to optimize ad placement in online advertising.

The Markov Decision Process

At the heart of reinforcement learning is the Markov Decision Process (MDP), a mathematical framework for modelling sequential decision-making problems. An MDP consists of a set of states, a set of actions, a reward function, and a transition function that describes how the environment changes when an action is taken. The process is called Markov because the next state depends only on the current state and action, not on the full history of how the agent got there. The goal of an agent in an MDP is to learn a policy, a mapping from each state to an action, that maximizes the expected cumulative reward over time.
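In standard textbook notation, these pieces can be written out as follows. This is a sketch of the common formulation rather than anything specific to this article. The discount factor γ and the expectation are conventional additions: γ makes rewards that arrive sooner count for more, and the expectation averages over random transitions. The transition function P expresses the Markov property by conditioning only on the current state and action.

```latex
\mathcal{M} = (\mathcal{S}, \mathcal{A}, P, r, \gamma), \qquad
P(s' \mid s, a) = \Pr\left(S_{t+1} = s' \mid S_t = s,\, A_t = a\right)

\pi^{*} = \arg\max_{\pi} \; \mathbb{E}_{\pi}\left[\sum_{t=0}^{\infty} \gamma^{t} R_{t+1}\right],
\qquad \pi : \mathcal{S} \to \mathcal{A}, \quad 0 \le \gamma < 1
```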

Much as Pavlov’s dogs learned to associate the bell with food, these agents learn to associate their actions with rewards or punishments, gradually adapting their behaviour to maximize the desired outcomes.

Key Challenges

Exploration vs. Exploitation

One of the key challenges in RL is the exploration-exploitation tradeoff. The agent needs to explore the environment to learn about it, but it also needs to exploit its current knowledge to make decisions that lead to high rewards. Balancing these two objectives can be difficult, as too much exploration can lead to wasted effort, while too much exploitation can lead to suboptimal performance.
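Epsilon-greedy action selection is a classic way to balance the two. The sketch below applies it to a multi-armed bandit, which is a row of slot machines with unknown payouts. It is a minimal illustration under assumed settings. The arm means, the noise level and the exploration rate `epsilon` are hypothetical values chosen for the example, and epsilon-greedy is only one of several exploration strategies.

```python
import random


def epsilon_greedy_bandit(true_means, steps=5000, epsilon=0.1, seed=0):
    """Run epsilon-greedy on a Gaussian multi-armed bandit.

    true_means: hypothetical expected payout of each arm (unknown to the agent).
    Returns the agent's value estimates and how often each arm was pulled.
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    estimates = [0.0] * n_arms  # running average reward per arm
    counts = [0] * n_arms       # number of pulls per arm

    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)  # explore: try a random arm
        else:
            # exploit: pick the arm with the highest current estimate
            arm = max(range(n_arms), key=lambda a: estimates[a])
        reward = rng.gauss(true_means[arm], 1.0)  # noisy payout
        counts[arm] += 1
        # incremental mean update: Q <- Q + (r - Q) / n
        estimates[arm] += (reward - estimates[arm]) / counts[arm]

    return estimates, counts


if __name__ == "__main__":
    est, cnt = epsilon_greedy_bandit([0.2, 0.5, 1.0])
    print("estimates:", [round(q, 2) for q in est])
    print("pulls:", cnt)
```

Raising `epsilon` spends more pulls on arms already known to be poor. Setting it to 0 risks locking onto whichever arm happened to look best early on.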

The Curse of Dimensionality

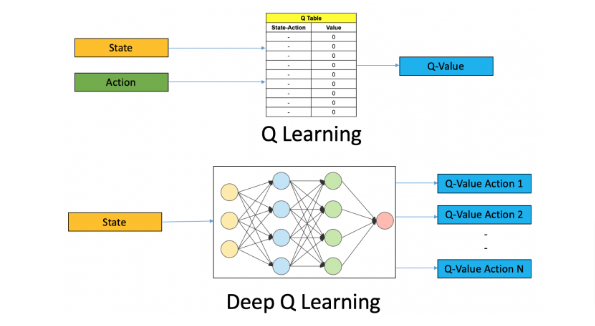

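Another challenge in RL is the curse of dimensionality. The number of states grows exponentially with the number of variables needed to describe the environment. The number of possible deterministic policies in turn grows exponentially with the number of states, so searching for an optimal policy by brute force quickly becomes infeasible. Dynamic-programming techniques like value iteration and policy iteration, and sample-based methods like Q-learning, avoid enumerating every policy by building up value estimates incrementally. In their basic tabular form, however, they still store a value for every state (or state-action pair). Large problems therefore usually pair them with function approximation, such as neural networks, so that the agent can generalize to states it has never visited.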
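Value iteration shows how dynamic programming sidesteps brute-force policy search. A brute-force approach would score every deterministic policy, and with |A| actions and |S| states there are |A|^|S| of them (2^10 = 1024 for a 10-state, 2-action problem). Value iteration instead repeatedly applies a one-step Bellman backup to each state. The corridor environment below is a hypothetical toy chosen for illustration, and the code is a minimal tabular sketch, not a production implementation.

```python
def value_iteration(n_states=10, gamma=0.9, tol=1e-8):
    """Value iteration on a hypothetical deterministic corridor MDP.

    States are 0..n_states-1 and actions are 0 (left) and 1 (right).
    Moving into the last state earns reward 1 and ends the episode.
    """
    actions = (0, 1)
    terminal = n_states - 1

    def step(s, a):
        # Deterministic move of one cell, clipped at the walls.
        s_next = max(0, s - 1) if a == 0 else min(terminal, s + 1)
        reward = 1.0 if s_next == terminal else 0.0
        return s_next, reward

    def q_value(s, a, V):
        # One-step lookahead: immediate reward plus discounted next value.
        s_next, reward = step(s, a)
        return reward + gamma * V[s_next]

    V = [0.0] * n_states  # V[terminal] stays 0 because nothing follows it
    while True:
        delta = 0.0
        for s in range(terminal):  # sweep every non-terminal state
            best = max(q_value(s, a, V) for a in actions)  # Bellman optimality backup
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            break

    # Read off the greedy policy from the converged values.
    policy = [max(actions, key=lambda a: q_value(s, a, V)) for s in range(terminal)]
    return V, policy


if __name__ == "__main__":
    V, policy = value_iteration()
    print("V[0] =", round(V[0], 4))  # equals 0.9 ** 8
    print("policy:", policy)         # 1 = move right in every state
    print("brute-force policies to check:", 2 ** 10)  # |A| ** |S|
```

Each sweep still visits every state. This is why tabular value iteration does not escape the curse of dimensionality on its own.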

Learning Through Trial and Error

One of the key advantages of reinforcement learning is its ability to learn through trial and error. The agent can explore the environment and learn from its mistakes, gradually improving its policy over time. This makes it particularly useful for complex tasks that are difficult to program manually, such as playing games or navigating complex environments.
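Tabular Q-learning, one of the value-based methods mentioned in this article, makes the trial-and-error loop concrete. In this sketch, the corridor environment, the learning rate `alpha`, the discount `gamma` and the exploration rate `epsilon` are all assumed, illustrative choices. The agent is never told the rules. It only sees the rewards that follow its actions.

```python
import random


def q_learning(n_states=6, episodes=500, alpha=0.1, gamma=0.9,
               epsilon=0.2, max_steps=100, seed=0):
    """Tabular Q-learning on a hypothetical corridor environment.

    Each episode starts in state 0; action 0 moves left, action 1 moves
    right, and reaching the last state earns reward 1 and ends the episode.
    """
    rng = random.Random(seed)
    terminal = n_states - 1
    Q = [[0.0, 0.0] for _ in range(n_states)]  # Q[state][action]

    def step(s, a):
        s_next = max(0, s - 1) if a == 0 else min(terminal, s + 1)
        return s_next, (1.0 if s_next == terminal else 0.0)

    for _ in range(episodes):
        s = 0
        for _ in range(max_steps):
            # Trial: explore at random (or break ties), otherwise act greedily.
            if rng.random() < epsilon or Q[s][0] == Q[s][1]:
                a = rng.randrange(2)
            else:
                a = 0 if Q[s][0] > Q[s][1] else 1
            s_next, r = step(s, a)
            # Error: compare the estimate with reward + discounted best next value.
            target = r if s_next == terminal else r + gamma * max(Q[s_next])
            Q[s][a] += alpha * (target - Q[s][a])
            s = s_next
            if s == terminal:
                break
    return Q


if __name__ == "__main__":
    Q = q_learning()
    print(["right" if q[1] > q[0] else "left" for q in Q[:-1]])
```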

Types of RL Methods

Reinforcement learning algorithms are typically divided into two categories:

- Value-based methods involve estimating the value of each state or state-action pair and selecting actions that maximize this value.

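- Policy-based methods directly optimize a parameterized policy, typically by adjusting its parameters (for example, with gradient ascent) to increase the expected cumulative reward, without first learning a value function.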
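The sketch below shows a policy-based method for contrast: REINFORCE, a basic policy-gradient algorithm, applied to a two-armed bandit. Unlike value-based methods, it keeps no value estimates at all. It stores softmax action preferences and nudges them in the direction that makes rewarded actions more likely. The payout probabilities, step size `lr` and number of steps are hypothetical. Practical implementations usually also subtract a baseline to reduce variance.

```python
import math
import random


def softmax(prefs):
    m = max(prefs)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_bandit(win_probs, steps=3000, lr=0.1, seed=0):
    """REINFORCE (vanilla policy gradient) on a Bernoulli multi-armed bandit.

    win_probs: hypothetical chance that each arm pays reward 1 (otherwise 0).
    Only policy parameters are learned; there is no value function.
    Returns the final action probabilities.
    """
    rng = random.Random(seed)
    theta = [0.0] * len(win_probs)  # softmax preferences = policy parameters
    arms = range(len(theta))
    for _ in range(steps):
        probs = softmax(theta)
        arm = rng.choices(arms, weights=probs)[0]  # sample from the policy
        reward = 1.0 if rng.random() < win_probs[arm] else 0.0
        # d/d theta[b] of log pi(arm) is 1[b == arm] - probs[b]
        for b in arms:
            grad = (1.0 if b == arm else 0.0) - probs[b]
            theta[b] += lr * reward * grad  # gradient ascent on expected reward
    return softmax(theta)


if __name__ == "__main__":
    print([round(p, 3) for p in reinforce_bandit([0.2, 0.8])])
```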

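There are also hybrid methods that combine elements of both approaches. The best known are actor-critic methods, in which a learned value function (the critic) guides updates to a policy (the actor). Q-learning, one of the best-known value-based methods, can be run with a simple lookup table. Deep reinforcement learning replaces such tables with neural networks so that value functions and policies can scale to problems with very large state spaces.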
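One-step actor-critic is a minimal example of such a hybrid, sketched here on a hypothetical corridor environment. The critic learns a value for each state. Its temporal-difference (TD) error then tells the actor whether an action turned out better or worse than expected. This tabular version uses no neural networks; deep actor-critic methods replace both tables with networks. All names and hyperparameters here are illustrative assumptions.

```python
import math
import random


def actor_critic_corridor(n_states=6, episodes=1000, actor_lr=0.1,
                          critic_lr=0.1, gamma=0.9, max_steps=100, seed=0):
    """One-step actor-critic on a hypothetical corridor environment.

    Action 0 moves left, action 1 moves right, and reaching the last state
    earns reward 1 and ends the episode. Returns each non-terminal state's
    action probabilities and the critic's value estimates.
    """
    rng = random.Random(seed)
    terminal = n_states - 1
    prefs = [[0.0, 0.0] for _ in range(n_states)]  # actor: softmax preferences
    V = [0.0] * n_states                           # critic: state-value estimates

    def policy(s):
        m = max(prefs[s])  # subtract the max for numerical stability
        exps = [math.exp(p - m) for p in prefs[s]]
        total = sum(exps)
        return [e / total for e in exps]

    for _ in range(episodes):
        s = 0
        for _ in range(max_steps):
            probs = policy(s)
            a = 0 if rng.random() < probs[0] else 1
            s_next = max(0, s - 1) if a == 0 else min(terminal, s + 1)
            r = 1.0 if s_next == terminal else 0.0
            # Critic: TD error = how much better this step went than V[s] predicted.
            v_next = 0.0 if s_next == terminal else V[s_next]
            td_error = r + gamma * v_next - V[s]
            V[s] += critic_lr * td_error
            # Actor: move preferences along grad log pi(a|s), scaled by the TD error.
            for b in range(2):
                grad = (1.0 if b == a else 0.0) - probs[b]
                prefs[s][b] += actor_lr * td_error * grad
            s = s_next
            if s == terminal:
                break

    return [policy(s) for s in range(terminal)], V


if __name__ == "__main__":
    probs, V = actor_critic_corridor()
    print([round(p[1], 3) for p in probs])  # probability of moving right per state
```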

Real-World Applications

Despite the challenges, RL has shown great promise in a wide range of applications:

- DeepMind’s AlphaGo famously combined RL through self-play with supervised learning from human games and Monte Carlo tree search to play the complex game of Go at a superhuman level.

- Researchers are exploring RL for autonomous vehicles, for example to make driving decisions in complex urban traffic.

- RL has been applied to reducing energy consumption in data centers, for example by optimizing how their cooling systems are run.

- In robotics, RL is a core technique of robot learning, the subfield devoted to robots that acquire skills through learning rather than explicit programming.

The Future

So, will RL replace human decision-making entirely? Not likely. RL has shown great promise, but it is still limited by the quality and quantity of experience available for training, since trial-and-error learning can require a very large number of interactions. It is also limited by the complexity of the environment being modelled. Additionally, RL algorithms can be difficult to interpret, which can be a concern in safety-critical applications.

That said, RL is an incredibly powerful tool for navigating an unpredictable world. It allows machines to learn from their experiences and adapt to changing circumstances, just like humans do. And who knows, maybe one day we’ll even see robots playing Go alongside the world’s best human players.